Strategic Equilibrium in Digital Acquisition: A Comparative Analysis of SnipeSearch AdClicks and Google Ads in High-Value Financial Verticals

The digital advertising landscape of 2026 represents a departure from the historical reliance on centralized, single-platform dominance. For enterprises operating within specialized sectors, specifically offshore corporate services, international banking, and trust formations, the cost of customer acquisition has scaled at a rate that frequently outpaces the efficiency of traditional “black box” algorithmic bidding.

During the performance window of March and April 2026, a comparative study was conducted for an offshore service provider to evaluate the efficacy of SnipeSearch AdClicks as a high-precision alternative to Google Ads. This analysis documents the first recorded instance in which a specialized campaign on SnipeSearch outperformed Google Ads on key performance indicators (KPIs), offering a blueprint for a resilient, two-tier traffic acquisition model that prioritizes human intent over raw volume.

The Performance Paradox: Dissecting the March–April 2026 Campaign

The foundation of this investigation is a 30-day cross-platform campaign run directly by the SnipeSearch AdClicks team. Our objective was to generate qualified traffic for company formation and international banking services, a highly competitive niche where cost-per-click rates are traditionally elevated and traffic quality is critical. While Google Ads has long been the default channel in this sector, rising acquisition costs and persistent concerns around invalid traffic led us at SnipeSearch to test our own AdClicks platform alongside it as part of a transparent comparative campaign.

The results from that 30-day period highlighted a clear change in the economics of search advertising. Both platforms delivered almost identical click volumes, yet the spend required to achieve those outcomes was dramatically different. This reinforced what we have long believed at SnipeSearch: traffic volume alone is not the most meaningful metric. Efficiency, transparency, and verified visitor quality matter just as much.

| Metric | SnipeSearch AdClicks | Google Ads |
|---|---|---|
| Impressions | 11,557 | 12,578 |
| Total Clicks | 545 | 539 |
| Total Cost | £52 | £298 |
| Average CPC | £0.10 | £0.55 |
| CPM (Cost Per Mille) | £4.50 | £23.65 |
| Estimated Human Clicks | ~540 | ~377 |
| Non-Human / Invalid Traffic | <1% | ~30% |
| Effective Cost per Human Click | ~£0.10 | ~£0.79 |

The financial implications of these metrics are significant. SnipeSearch delivered equivalent traffic for £52, a monthly saving of £246 compared to Google Ads. Extrapolated over twelve months, that suggests a potential recovery of £2,952 in ad spend. For a small to mid-sized boutique firm, this represents a meaningful reallocation of capital toward innovation or market expansion. The effective cost per human click (eCPHC) serves as the definitive metric for efficiency here, calculated as follows:

$$
\text{eCPHC} = \frac{\text{Total Spend}}{\text{Total Clicks} \times (1 - \text{IVT Rate})}
$$

Applying this formula to the recorded data, the effective cost for SnipeSearch remains approximately £0.10 due to the negligible invalid traffic rate, whereas the Google Ads effective cost rises to £0.79 after adjusting for the 30% invalid traffic identified through secondary forensic tools.
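As a quick check on the arithmetic, the calculation can be sketched in a few lines of Python. The function name is illustrative, and because the SnipeSearch invalid traffic rate is reported only as "<1%", the value 0.01 is used here as an assumed upper bound rather than a measured figure.

```python
def effective_cost_per_human_click(total_spend: float, total_clicks: int, ivt_rate: float) -> float:
    """Total spend divided by the clicks that remain after removing invalid traffic."""
    if not 0.0 <= ivt_rate < 1.0:
        raise ValueError("ivt_rate must be in the range [0, 1)")
    human_clicks = total_clicks * (1.0 - ivt_rate)
    if human_clicks <= 0:
        raise ValueError("no human clicks to divide by")
    return total_spend / human_clicks


# Spend and click figures come from the campaign table.
# 0.01 is an assumed upper bound for the "<1%" SnipeSearch IVT rate.
snipesearch_ecphc = effective_cost_per_human_click(52.0, 545, 0.01)
google_ads_ecphc = effective_cost_per_human_click(298.0, 539, 0.30)

print(f"SnipeSearch eCPHC: £{snipesearch_ecphc:.2f}")
print(f"Google Ads eCPHC:  £{google_ads_ecphc:.2f}")
```

Rounded to two decimal places, this reproduces the £0.10 and £0.79 figures in the table.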

Geographic Integrity and Commercial Intent Alignment

The discrepancy in traffic quality is most visible when analyzing the geographic distribution of visitors. In the offshore corporate services industry, the value of a click is intrinsically linked to the jurisdiction of the user. High-value jurisdictions typically include financial hubs or regions with established demand for international structuring, such as the United States, United Kingdom, United Arab Emirates, and various European financial centers.

The SnipeSearch campaign demonstrated a high degree of geographic alignment with the client’s core business model, which specializes in jurisdictions like the British Virgin Islands (BVI), Seychelles, Belize, and the Ras Al Khaimah (RAK) free zone.

| Rank | SnipeSearch Top Countries (Share %) | Google Ads Top Countries (Share %) |
|---|---|---|
| 1 | United States (21.3%) | Lesotho (14.2%) |
| 2 | France (12.1%) | Dominican Republic (12.2%) |
| 3 | Vietnam (8.5%) | Türkiye (7.1%) |
| 4 | Malta (7.8%) | South Africa (6.9%) |
| 5 | Canada (6.4%) | Saudi Arabia (4.7%) |
| 6 | Gibraltar (5.7%) | Uzbekistan (2.9%) |
| 7 | United Kingdom (5.0%) | United Kingdom (2.7%) |
| 8 | Switzerland (3.5%) | Yemen (2.5%) |
| 9 | Romania (2.8%) | Ukraine (2.2%) |
| 10 | UAE (2.8%) | Solomon Islands (2.0%) |

The geographic data for Google Ads shows a concerning concentration in lower-cost emerging markets and regions with minimal historical interest in premium offshore corporate structuring. Lesotho, for instance, accounted for 14.2% of the total click volume at an unusually high click-through rate (CTR) of 45.88%. Similarly, the Dominican Republic and Türkiye collectively accounted for nearly 20% of the volume, often originating from regions associated with scale buying rather than genuine commercial intent.

The “Why” behind this shift is often found in the automated nature of modern ad platforms. Google’s algorithm naturally prioritizes the path of least resistance to fulfill click quotas. If a campaign is not strictly constrained by “Presence Only” location settings, the system will gravitate toward cheaper, high-CTR regions to meet the daily budget targets. This creates an illusion of high engagement that masks a lack of conversion potential. SnipeSearch, by contrast, delivered traffic from regions with established financial infrastructure, such as Gibraltar, Malta, Switzerland, and the UAE, jurisdictions that directly correlate with the client’s service offerings.
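One rough way to quantify this alignment is to sum the share of top-10 clicks that fall inside a list of target jurisdictions. The sketch below uses the shares from the country table. The target set is an assumption made for illustration, combining the hubs named in this section (United States, United Kingdom, UAE, Gibraltar, Malta, Switzerland); it is not a list supplied by the client.

```python
# Top-10 country shares (%) copied from the country table.
SNIPESEARCH_SHARES = {
    "United States": 21.3, "France": 12.1, "Vietnam": 8.5, "Malta": 7.8,
    "Canada": 6.4, "Gibraltar": 5.7, "United Kingdom": 5.0,
    "Switzerland": 3.5, "Romania": 2.8, "UAE": 2.8,
}
GOOGLE_ADS_SHARES = {
    "Lesotho": 14.2, "Dominican Republic": 12.2, "Türkiye": 7.1,
    "South Africa": 6.9, "Saudi Arabia": 4.7, "Uzbekistan": 2.9,
    "United Kingdom": 2.7, "Yemen": 2.5, "Ukraine": 2.2,
    "Solomon Islands": 2.0,
}

# Assumed target set for illustration only; adjust to the real client brief.
TARGET_JURISDICTIONS = frozenset({
    "United States", "United Kingdom", "UAE",
    "Gibraltar", "Malta", "Switzerland",
})


def target_share(shares: dict[str, float], targets: frozenset[str] = TARGET_JURISDICTIONS) -> float:
    """Sum the percentage share of clicks that came from target jurisdictions."""
    return round(sum(pct for country, pct in shares.items() if country in targets), 1)


print("SnipeSearch target share:", target_share(SNIPESEARCH_SHARES))
print("Google Ads target share: ", target_share(GOOGLE_ADS_SHARES))
```

Under this assumed target set, the function returns 46.1 for SnipeSearch and 2.7 for Google Ads. The figures cover only the top-10 countries on each platform, so they indicate the direction of the gap rather than a complete measurement.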

The Technology of Transparency: Forensic Identification of Bot Traffic

The primary challenge for digital advertisers in 2026 is the identification and mitigation of Sophisticated Invalid Traffic (SIVT). The March–April campaign utilized a multi-layered verification stack involving CAPTCHAs, honeypots, site logs, Statcounter data, and Cloudflare analytics to isolate human intent from automated noise.

The Role of Anti-Spam Honeypots

Honeypots were implemented as the first line of defense. These are traps designed to lure bots into revealing themselves by interacting with elements that are invisible to human users. When a hidden form field is added to a page, automated scripts that scan the HTML source and fill in every available field will populate it, while a human visitor never sees it.

In the context of the offshore services site, form submissions that included data in these hidden fields were used to flag and exclude the originating IP addresses. The data suggests that while these simple traps are effective against unsophisticated scrapers, the high-CPC finance niche often attracts more advanced bots capable of rendering JavaScript and bypassing standard CSS-hidden elements.
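The server-side half of this check can be sketched as follows. This is a minimal illustration, not the campaign's actual implementation: the field name `website_url`, the function names, and the sample IP addresses (taken from reserved documentation ranges) are all hypothetical.

```python
from typing import Iterable, Mapping, Tuple

# Hypothetical name of the form field that is hidden from human visitors.
HONEYPOT_FIELD = "website_url"


def is_honeypot_triggered(form_data: Mapping[str, str], honeypot_field: str = HONEYPOT_FIELD) -> bool:
    """Return True when the hidden field holds any non-whitespace value."""
    return bool(form_data.get(honeypot_field, "").strip())


def collect_flagged_ips(submissions: Iterable[Tuple[str, Mapping[str, str]]]) -> set[str]:
    """Return the set of IP addresses whose submissions filled in the trap field."""
    return {ip for ip, form_data in submissions if is_honeypot_triggered(form_data)}


# Illustrative submissions only.
sample_submissions = [
    ("203.0.113.10", {"name": "A. Client", "email": "a@example.com", "website_url": ""}),
    ("198.51.100.7", {"name": "bot", "email": "b@example.com", "website_url": "http://spam.example"}),
]
print(collect_flagged_ips(sample_submissions))
```

As the paragraph above notes, a check like this only catches scripts that fill every field blindly, so it is best treated as one signal among several.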

Frictionless Verification and CAPTCHA Strategy

To protect high-value conversion points, such as the “Form an Offshore Company” funnel, a hybrid CAPTCHA strategy was employed. Modern bot detection recognizes that traditional CAPTCHAs can disrupt the user journey and drive abandonment. Consequently, the campaign utilized “frictionless” challenges, including Proof-of-Work (PoW) mechanisms.

Under this model, the visitor’s device performs a background cryptographic calculation that requires no human interaction. If the request originates from a botnet, where millions of requests are processed simultaneously, the cumulative computational cost of these tasks becomes prohibitive for the attacker, effectively rate-limiting the fraud attempt. This transition from visual puzzles to technical barriers was critical in maintaining the 540 estimated human clicks on the SnipeSearch platform.
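To make the idea concrete, here is a hashcash-style sketch of a proof-of-work challenge. The source does not describe the exact scheme used in the campaign, so the SHA-256 construction, the `challenge:nonce` format, and the difficulty value are assumptions chosen for illustration.

```python
import hashlib
import secrets


def _has_leading_zero_bits(digest: bytes, difficulty_bits: int) -> bool:
    """Check that the first `difficulty_bits` bits of the digest are all zero."""
    value = int.from_bytes(digest, "big")
    return value >> (len(digest) * 8 - difficulty_bits) == 0


def issue_challenge() -> str:
    """Server side: create a random, single-use challenge string."""
    return secrets.token_hex(16)


def solve(challenge: str, difficulty_bits: int) -> int:
    """Client side: brute-force the smallest nonce that satisfies the difficulty target."""
    nonce = 0
    while True:
        digest = hashlib.sha256(f"{challenge}:{nonce}".encode()).digest()
        if _has_leading_zero_bits(digest, difficulty_bits):
            return nonce
        nonce += 1


def verify(challenge: str, nonce: int, difficulty_bits: int) -> bool:
    """Server side: a single hash confirms the client did the work."""
    digest = hashlib.sha256(f"{challenge}:{nonce}".encode()).digest()
    return _has_leading_zero_bits(digest, difficulty_bits)


challenge = issue_challenge()
nonce = solve(challenge, difficulty_bits=12)  # roughly 4,096 hashes on average
print(verify(challenge, nonce, difficulty_bits=12))
```

The asymmetry is the point of the design: solving takes thousands of hash attempts while verifying takes one, so the cost is negligible for a single visitor but accumulates for a client issuing requests in bulk.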

Statcounter and the Analysis of “Paid Traffic” Patterns

Statcounter data provided a granular look at individual visitor paths that aggregate analytics often miss. The “Paid Traffic Report” in Statcounter was instrumental in identifying IP addresses with repetitive ad-clicking behavior from non-target ISPs. By monitoring the “Average Visit Time,” the team identified a cluster of traffic from Google Ads where session durations were consistently under five seconds.

The relationship between visit length and click validity is a key indicator of intent:

- Human Behavior: Typically involves scrolling, reading content, and multi-page navigation.

- Bot Behavior: Often characterized by “land and bounce” patterns or “pixel-perfect” mouse movements that lack the human timing jitter.
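The "land and bounce" pattern can be screened for with a simple rule over exported session records. The sketch below assumes a hypothetical record shape (`Session`) and is not Statcounter's export format. The five-second threshold comes from the text, and the single-page condition is an added assumption.

```python
from dataclasses import dataclass

SHORT_VISIT_SECONDS = 5.0  # threshold mentioned in the text


@dataclass(frozen=True)
class Session:
    """Hypothetical session record; the field names are illustrative."""
    ip: str
    source: str
    duration_seconds: float
    pages_viewed: int


def is_land_and_bounce(session: Session, threshold: float = SHORT_VISIT_SECONDS) -> bool:
    """A very short, single-page visit is treated as a land-and-bounce candidate."""
    return session.duration_seconds < threshold and session.pages_viewed <= 1


def short_visit_share(sessions: list[Session], source: str) -> float:
    """Fraction of sessions from one source that match the land-and-bounce rule."""
    matching = [s for s in sessions if s.source == source]
    if not matching:
        return 0.0
    return sum(is_land_and_bounce(s) for s in matching) / len(matching)


# Illustrative records only, not campaign data.
sample_sessions = [
    Session("203.0.113.5", "google_ads", 2.1, 1),
    Session("203.0.113.6", "google_ads", 94.0, 4),
    Session("198.51.100.9", "snipesearch", 61.5, 3),
]
print(short_visit_share(sample_sessions, "google_ads"))
```

A short visit is not proof of automation on its own, so a rule like this is better used to shortlist IP addresses for closer review than to exclude them outright.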

Cloudflare Bot Management and Security Heuristics

Cloudflare’s security stack provided the third tier of verification. Cloudflare Bot Management uses machine learning, behavioral analysis, and fingerprinting to assign a “Bot Score” (1–99) to every request.

- Score 1: Definitely automated.

- Scores 2-29: Likely automated (scrapers, credential stuffing tools).

- Scores 30-99: Likely human.

The Cloudflare analytics for SnipeSearch traffic consistently showed scores in the 30–99 range, confirming the high human-to-bot ratio. Furthermore, Cloudflare’s “Super Bot Fight Mode” was used to challenge traffic originating from known data centers or anonymous proxies, sources that are frequently used to mask click farm activity in the finance niche.
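The score bands listed above translate directly into a small classifier that can be run over exported request logs. This is a sketch over plain integers: the function names are illustrative, and it does not call any Cloudflare API.

```python
def classify_bot_score(score: int) -> str:
    """Map a bot score (1-99) to the bands described in the text."""
    if not 1 <= score <= 99:
        raise ValueError("bot score must be between 1 and 99")
    if score == 1:
        return "automated"
    if score <= 29:
        return "likely_automated"
    return "likely_human"


def likely_human_share(scores: list[int]) -> float:
    """Fraction of requests whose score falls in the 30-99 band."""
    if not scores:
        return 0.0
    return sum(classify_bot_score(s) == "likely_human" for s in scores) / len(scores)


# Illustrative scores only, not campaign data.
print(likely_human_share([1, 12, 45, 88]))
```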

The “How” and “Why” of SnipeSearch’s Performance

The central question remains: how did SnipeSearch outstrip Google Ads in the first month of this trial? The answer lies in the fundamental difference between a broad-market “black box” and a niche-focused search ecosystem.

Intent-Based Precision vs. Algorithmic Fulfillment

Google Ads operates on an “auto-optimization” loop. When a campaign uses Smart Bidding (such as Target CPA), the algorithm learns from signals. If a bot successfully triggers a “soft” conversion (like a page view or a PDF download), the algorithm is “poisoned.” It begins to bid more aggressively on traffic sources that exhibit the same characteristics as that bot, leading to a feedback loop of wasted spend.

SnipeSearch AdClicks, by contrast, operates with a more direct correlation between the search query and the ad placement. In the corporate services niche, where the intent is highly specific (e.g., “BVI company formation with banking”), SnipeSearch’s focus on verified human traffic through stricter edge-level filtering ensures that the advertiser is not competing against automated “noise”.

The Search Partner Network Vulnerability

A notable share of the £298 spent through Google Ads appears to have been absorbed by the Search Partner Network (SPN), even though the SPN option was disabled for the campaign. While Google has introduced suitability changes, including the announced removal of parked domains in 2026, many third-party networks still operate with varying degrees of transparency, which in some cases can reduce visibility into exact placement and user context. In higher-cost sectors such as finance, legal, and corporate services, this can still create exposure to inefficient spend through low-intent impressions, accidental clicks, and broader attribution noise.

By contrast, the SnipeSearch AdClicks ecosystem is designed around a more controlled and verified supply chain. Although it does operate a Search Partner Network, access is gated through strict domain and subdomain-level vetting, where each property must be individually approved and issued a unique key before it can serve ads. This structure prevents open registration of parked domains and makes it significantly harder for low-quality or templated “made-for-advertising” sites to participate at scale.

Additional safeguards further reinforce inventory quality. Subdomains are also uniquely keyed, which blocks hidden MFA-style deployments and prevents unverified replication of ad-serving environments. In parallel, ad load is capped so that if placements exceed a small number of instances per page (typically more than three), additional ads are not served, reducing clutter and limiting artificial inventory inflation.
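The gating rules described above (one key per approved hostname, subdomains keyed separately, and a cap on ads per page) can be expressed as a short sketch. Everything here is hypothetical: the registry contents, key values, and function names are invented for illustration and do not describe SnipeSearch's internal systems.

```python
import hmac

MAX_ADS_PER_PAGE = 3  # the text describes a cap of about three placements

# Hypothetical registry: each approved hostname, including each subdomain, has its own key.
APPROVED_KEYS = {
    "publisher.example": "key-root-001",
    "blog.publisher.example": "key-blog-002",
}


def is_authorised(hostname: str, presented_key: str, registry: dict[str, str]) -> bool:
    """Exact hostname match only: a parent domain's key does not cover its subdomains."""
    expected = registry.get(hostname.lower())
    if expected is None:
        return False
    return hmac.compare_digest(expected, presented_key)


def slots_to_fill(hostname: str, presented_key: str, requested_slots: list[str],
                  registry: dict[str, str], max_ads: int = MAX_ADS_PER_PAGE) -> list[str]:
    """Serve nothing to unapproved hosts and at most `max_ads` slots to approved ones."""
    if not is_authorised(hostname, presented_key, registry):
        return []
    return requested_slots[:max_ads]


print(slots_to_fill("blog.publisher.example", "key-blog-002",
                    ["top", "sidebar", "inline", "footer"], APPROVED_KEYS))
```

Looking up the exact hostname, rather than matching on a domain suffix, is what stops an unvetted subdomain from reusing its parent's approval.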

Monetisation integrity is also enforced at the delivery layer. Pages that fail to meet baseline content requirements, including those with minimal or non-substantive content, are not eligible for monetised ad serving. This ensures that even lower-traffic publishers must maintain real, indexable content in order to participate in the network, reducing the risk of thin or spam-like inventory entering circulation.

As a result, SnipeSearch traffic is generated through a more tightly governed mix of owned surfaces and vetted partner domains, rather than open, self-serve third-party expansion.

Because these environments are either platform-controlled or individually approved, placements are more transparent and easier to audit, and traffic quality can be assessed at the domain level rather than inferred at a network level.

Users engaging across these ecosystems also tend to exhibit more intentional browsing behaviour, with lower ad fatigue and higher discovery intent, particularly when interacting with niche or independent publishers.

The result is not simply lower-cost traffic. It is traffic sourced through a constrained, quality-gated ecosystem designed to prioritise measurable engagement over unrestricted volume.

The Two-Tier Strategy: Why Consistent Traffic Requires Diversification

The success of the SnipeSearch campaign does not suggest that businesses should abandon Google. Rather, it highlights the necessity of a balanced, two-tier acquisition strategy. Dependence on a single platform increases exposure to policy shifts, algorithm updates, or sudden cost spikes.

Tier 1: The “Reach” Tier (Google Ads)

Google Ads remains the most powerful tool for capturing existing demand at scale. Its massive infrastructure allows for a “water hose” approach, turning traffic on or off instantly to meet volume requirements. Google is essential for “Branded Search” and “Retargeting,” ensuring that prospective clients who have already interacted with the brand are nurtured through the funnel.

Tier 2: The “Efficiency” Tier (SnipeSearch AdClicks)

SnipeSearch acts as the precision layer. It provides the “rain”: steady, high-quality traffic that builds the top of the funnel at a fraction of the cost. This tier is crucial for:

- Cost Control: Lower CPCs from SnipeSearch effectively subsidize the more expensive conversion-focused campaigns on Google.

- Quality Verification: Running SnipeSearch in parallel provides a “control group” to verify where genuine business demand is strongest.

- Resilience: Having a second, functional traffic source ensures that a technical issue or account limitation on one network does not result in a total cessation of leads.

The Synergy of the Model

The “water hose versus rain” analogy is appropriate here. While a hose (Google) can fill a bucket quickly, the rain (SnipeSearch) ensures the ground remains saturated over the long term without the same intensity of resource consumption. For the corporate services client, this meant that while Google provided the bulk of the raw “noise,” SnipeSearch provided the “signal” that actually aligned with their offshore jurisdictions and service expertise in BVI, Seychelles, and the UAE.

Advanced Behavioral Analysis and the Future of Search

The 2026 advertising landscape is increasingly shaped by the rise of automation in how information is collected and processed. In particular, the growth of AI-assisted browsing and automated agents is changing how digital interactions are interpreted and measured across the broader web ecosystem.

However, it is important to clarify SnipeSearch’s position directly: SnipeSearch does not use AI for search ranking, does not employ agentic AI systems, and has no plans to introduce agentic AI into its core search infrastructure. Any statements suggesting otherwise are incorrect. SnipeSearch is fundamentally a rules-based search system, focused on deterministic retrieval and transparent ranking. Certain optional features may present AI-generated summaries after organic results, but these are strictly supplementary and not part of the search or ranking process.

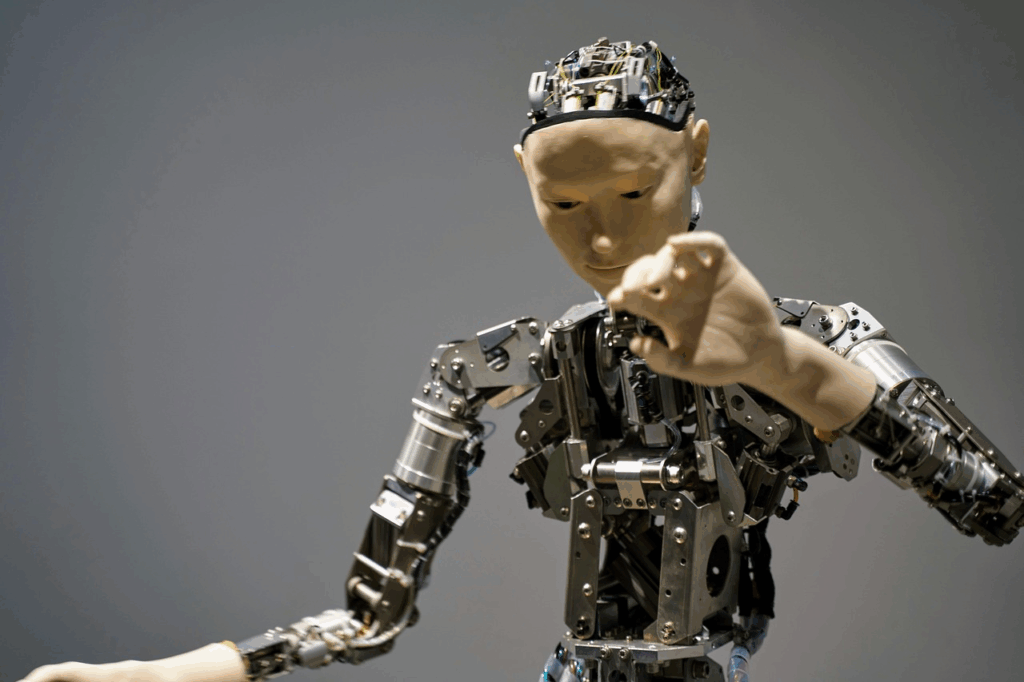

Agentic Browsing and Its Impact on Digital Advertising

Industry leaders, including Cloudflare CEO Matthew Prince, have highlighted the growing proportion of automated traffic on the internet and the increasing role of AI systems that retrieve and summarise information on behalf of users. In such environments, traditional assumptions around the “click” as the primary unit of intent are being re-evaluated.

As AI systems increasingly mediate how users consume content, advertisers and platforms are adapting toward measuring intent signals beyond simple click-through behaviour. This includes evaluating content structure, clarity, and machine readability so that information can be reliably interpreted and surfaced by downstream systems.

Importantly, SnipeSearch remains outside this paradigm of agent-driven search. It does not optimise for AI intermediaries, nor does it rely on automated reasoning systems to determine ranking or retrieval. Its focus remains on direct user queries and explicit interaction.

Behavioral Integrity and Traffic Quality Signals

To maintain integrity across advertising systems, SnipeSearch incorporates a range of anti-fraud and quality assurance mechanisms designed to identify non-human or low-quality interactions. These include established industry techniques such as:

- Interaction pattern analysis (e.g., abnormal click timing or repeated uniform behaviour)

- Session consistency checks (detecting unrealistic navigation sequences)

- Device and network anomaly detection (identifying emulated or rapidly rotating identifiers)

- Engagement validation signals (distinguishing immediate, non-deliberative actions from sustained user behaviour)

These systems are intended to preserve the quality of the advertising environment by ensuring that billing and attribution are based on meaningful interactions rather than automated or invalid activity.
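As one illustration of interaction pattern analysis, the sketch below measures how uniform the gaps between successive clicks are, using the coefficient of variation of the intervals. This is a generic technique shown under assumed thresholds. It is not a description of SnipeSearch's production logic, and the `min_jitter` value of 0.1 is arbitrary.

```python
from statistics import mean, pstdev
from typing import Optional, Sequence


def timing_jitter(timestamps: Sequence[float]) -> Optional[float]:
    """Coefficient of variation of the gaps between consecutive, sorted timestamps.

    Returns None when there are too few events to judge.
    """
    intervals = [later - earlier for earlier, later in zip(timestamps, timestamps[1:])]
    if len(intervals) < 2:
        return None
    average = mean(intervals)
    if average <= 0:
        return None
    return pstdev(intervals) / average


def looks_scripted(timestamps: Sequence[float], min_jitter: float = 0.1) -> bool:
    """Flag click sequences whose timing is more regular than the assumed threshold."""
    jitter = timing_jitter(timestamps)
    return jitter is not None and jitter < min_jitter


# Illustrative timestamps in seconds, not campaign data.
print(looks_scripted([0, 2, 4, 6, 8]))             # perfectly even spacing
print(looks_scripted([0, 1.2, 5.9, 7.0, 15.3]))    # irregular, human-like spacing
```

In practice a signal like this would be combined with the session, device, and engagement checks listed above, since regular timing alone can also come from legitimate automation such as uptime monitors.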

Rebalancing Toward Transparent Ecosystems

The broader shift in advertising strategy reflects a preference for environments where traffic quality can be more directly observed and validated at the source. In this context, SnipeSearch’s ecosystem combines owned surfaces with a tightly vetted partner network, prioritising transparency, content legitimacy, and measurable engagement.

Strategic Implementation: A Roadmap for High-Value Brands

For businesses in the financial consulting, offshore formation, or insurance sectors, the March–April 2026 data provides a clear path forward.

Phase 1: The Audit and Exclusion Stage

The first step is a rigorous audit of existing Google Ads campaigns. Advertisers must move beyond the default “Presence or Interest” setting and explicitly target “Presence Only” to eliminate curiosity-driven clicks from non-target jurisdictions. Additionally, the use of international location exclusions acts as a safety redundancy to prevent spend leakage into high-fraud regions.

Phase 2: Implementation of the “SnipeSearch Tier”

Integrating SnipeSearch AdClicks should be approached as a “precision scaling” move. By allocating 30–50% of the acquisition budget to SnipeSearch, brands can capture high-intent human traffic in competitive Tier 1 markets like the US, UK, and UAE without the premium “tax” associated with the Google ecosystem.

Phase 3: The Integration of Forensic Analytics

No modern ad campaign can succeed without an independent “source of truth.” Relying solely on the ad platform’s internal reporting exposes the advertiser to a conflict of interest, because platforms are incentivized to count clicks as valid to maximize revenue. Implementing Statcounter for path analysis and Cloudflare for edge-level bot mitigation ensures that the advertiser, not the botnet, remains in control of the budget.

Final Conclusions

The findings of the March–April 2026 campaign should be understood as a single, time-bound case study rather than a generalised or predictive outcome. While the results provide useful insight into comparative traffic quality and channel behaviour, they do not indicate a structural replacement of any existing advertising platform.

It is important to state clearly that SnipeSearch AdClicks is not positioned as a replacement for Google Ads, and no recommendation is made to discontinue or reduce reliance on Google as a primary acquisition channel. In practice, users are consistently encouraged to maintain diversified marketing strategies, including continued use of established platforms such as Google for scale and reach.

This specific test originated from a practical optimisation exercise: the objective was to help reduce inefficient spend in a client’s existing Google Ads budget (approximately £1,000/month), which was heavily concentrated in lower-value or high-noise traffic segments. The intent was to extend budget efficiency, reduce exposure to low-quality clicks, and explore whether a secondary channel could contribute a small percentage of higher-intent traffic. Any observed shift in contribution was incidental to this optimisation process rather than the primary goal.

To ensure transparency and reduce interpretive bias, multiple independent AI systems (including ChatGPT, Gemini, and Claude) were granted access to the underlying datasets, including Google Ads performance data, SnipeSearch AdClicks logs, Cloudflare analytics, Statcounter data, and internal tracking outputs. These models were used to independently review the datasets and assess the consistency and clarity of the reporting. Their involvement was strictly observational and verification-based; no model was used to generate or influence the underlying traffic data.

Across all systems, the conclusion remains consistent: this is a single-case evaluation under specific conditions, not a repeatable or guaranteed performance benchmark. It does not constitute evidence of systemic superiority, nor should it be interpreted as a claim that one platform can universally replace another.

The appropriate interpretation of this work is therefore methodological rather than promotional. It demonstrates how different traffic ecosystems behave under controlled measurement, and how budget allocation can be informed by segmentation, intent quality, and fraud resistance signals. However, outcomes will vary significantly across industries, geographies, campaign structures, and time periods.

As such, no broad claims are made regarding universal performance or scalability. Future work will continue to expand the dataset through additional case studies in order to identify potential patterns over time, with a focus on reproducibility, transparency, and controlled comparison.

Looking ahead, the emphasis in digital acquisition is expected to shift further toward trust, measurement integrity, and channel diversification. SnipeSearch AdClicks represents one component within that broader ecosystem: not a replacement for incumbent platforms, but an additional layer in a multi-channel strategy designed to improve visibility into traffic quality.