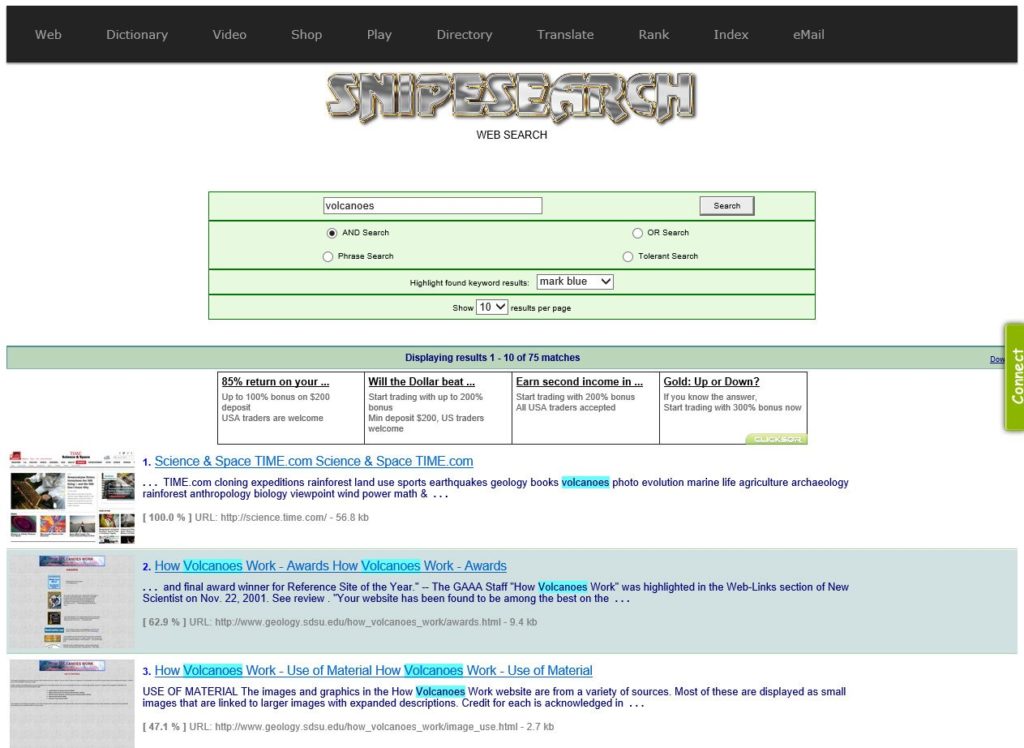

Previously on SnipeSearch: The Road to Sovereignty

SnipeSearch’s evolution is a history of escaping technical dependency. What began in 2005 as a residential project in Luton eventually hit a “bandwidth and finance wall” during its 2009–2011 rollout with an external firm called Orbit.

The Orbit Meta System became a strategic bottleneck: a $349/month setup throttled by a restrictive 5GB data cap and a mere 320GB of storage. As hosting costs spiraled and a domain ownership crisis lost the team snipesearch.com, the mission shifted from simple search to absolute technical sovereignty.

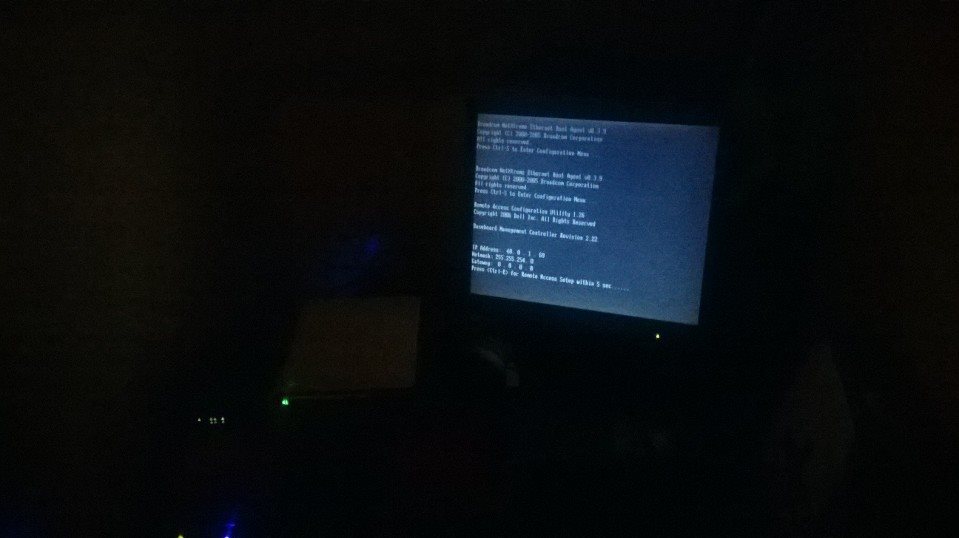

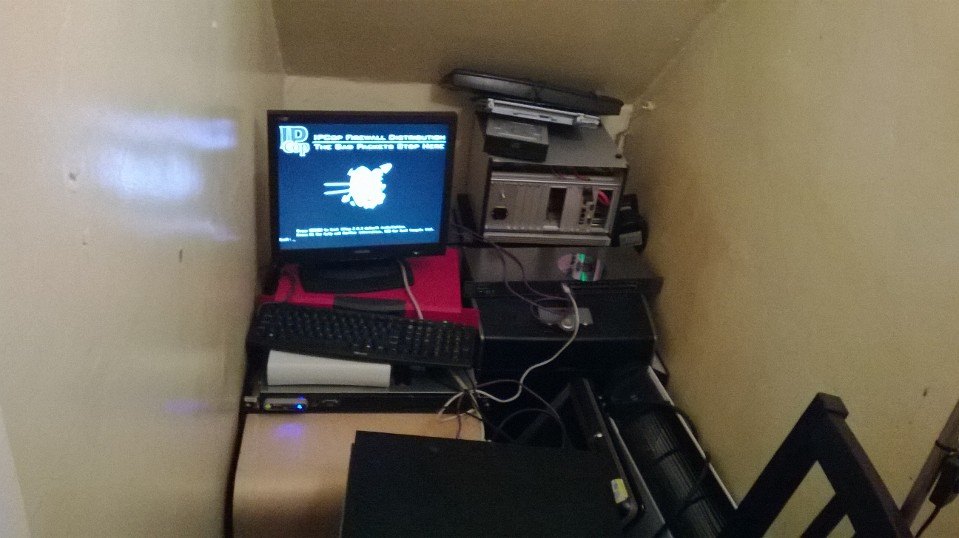

The goal was clear: build a “Zero-Trust” architecture where every stack component, from the hardware under the stairs to the ISP providing the pipe, was under direct internal control.

The 2013 Infrastructure Leap: Going All Out

By 2013, SnipeSearch traded opaque third-party rentals for a self-hosted enterprise cluster. While initial plans suggested 500GB drives, the “Digital State” actually launched with high-performance 2TB SSHD and SAS drives. This technical “overkill” provided a ~50x increase in storage over the Orbit era, fueling a high-velocity engine capable of spidering 10 million pages per month.

| Metric | Orbit Meta System (Previous) | 2013 Self-Hosted Cluster | Total Increase |
| --- | --- | --- | --- |
| Processing Cores | 8 Cores (Single Xeon) | 26 Cores (24 Xeon + 2 Opteron) | ~3.25x |
| Total RAM | 32 GB | 176 GB | ~5.5x |
| Storage Capacity | 320 GB | ~16 TB (Estimated) | ~50x |
| Bandwidth | 5GB Cap (Restricted) | 50Mbps Dedicated ISP | ~16.2 TB/month theoretical ceiling |

Network Capacity and Search Volume

The transition from a restricted 5GB monthly data cap to a dedicated 50Mbps business line fundamentally unlocked the engine’s user capacity. Assuming an average page load of 40KB for search results, the infrastructure jump allowed for a theoretical increase in monthly search volume of over 300,000%:

- Orbit Era (5GB Cap): Restricted to approximately 131,072 searches per month before hitting the bandwidth wall.

- Self-Hosted Era (50Mbps Connection): A dedicated 50Mbps line for clients could theoretically support approximately 405,000,000 searches per month.
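The two figures above can be reproduced with a few lines of arithmetic. This is a minimal sketch, not the original calculation: it assumes the capped figure was worked out in binary units (5 GiB divided by 40 KiB) and the line-rate figure in decimal units over a 30-day month at full saturation, since those are the assumptions that reproduce the published numbers. The function names are illustrative.

```python
# Assumptions: 40 KB per results page, a 5 GB monthly cap, and a 50 Mbps
# line running flat out for a 30-day month (a theoretical ceiling only).
PAGE_BYTES_BINARY = 40 * 1024          # 40 KiB, matches the capped figure
PAGE_BYTES_DECIMAL = 40 * 1000         # 40 kB, matches the line-rate figure
SECONDS_PER_MONTH = 30 * 24 * 60 * 60  # 2,592,000 seconds


def searches_under_cap(cap_gib: int = 5) -> int:
    """Searches per month that fit inside a monthly data cap."""
    return (cap_gib * 1024**3) // PAGE_BYTES_BINARY


def monthly_bytes(mbps: int = 50) -> int:
    """Bytes a saturated line can move in a 30-day month."""
    return mbps * 1_000_000 // 8 * SECONDS_PER_MONTH


def searches_on_line(mbps: int = 50) -> int:
    """Searches per month a saturated line could theoretically serve."""
    return monthly_bytes(mbps) // PAGE_BYTES_DECIMAL


def percent_increase(before: int, after: int) -> float:
    """Relative growth from `before` to `after`, as a percentage."""
    return (after - before) / before * 100


if __name__ == "__main__":
    capped = searches_under_cap()
    dedicated = searches_on_line()
    print(capped, dedicated, round(percent_increase(capped, dedicated)))
```

Under these assumptions the cap allows 131,072 searches, the line allows 405,000,000, and the saturated line moves 16.2 TB per month. Real traffic never saturates a line around the clock, so the second figure is best read as an upper bound.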

Software Sovereignty: Sphider Plus and Database Architectural Inspiration

2013 was the moment the “Digital State” moved from theory into physical iron. It was a transition characterized by a total rejection of technical dependency. While the external world saw a search engine, the internal reality was the construction of a high-performance cluster designed to outpace Silicon Valley from a residential “under the stairs” command center.

The Hardware Pivot: Hybrid-Flash and “Tier 0” Storage

The procurement of the enterprise cluster in March 2013 marked the end of the Orbit era. While the plan was to use the standard 500GB drives (which were included with the pre-owned servers purchased), we went with the 2TB SSHDs.

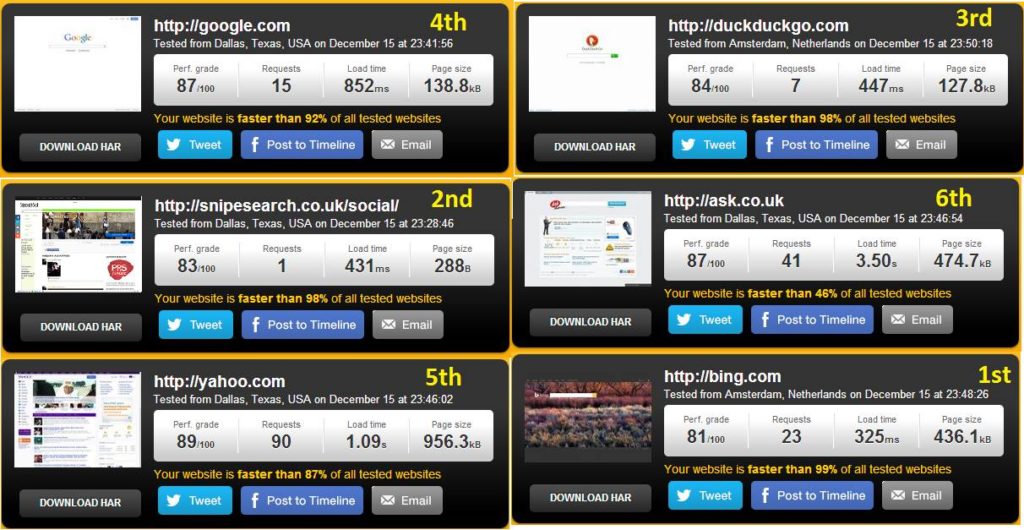

This was more than a storage upgrade; it was the implementation of a “Tier 0” storage architecture. By utilizing the 8GB of NAND flash on these cutting-edge 2013 SSHDs to cache high-frequency search signals, SnipeSearch achieved a 431ms load time. This empirical success, recorded by Pingdom on December 16, 2013, showed the sovereign cluster loading roughly twice as fast as Google (852ms), despite running on a residential-grade connection. The test was run against one of the more complex social media pages, not the simple landing page. The aim was a consistently tiny load time, which minimized how many simultaneous connections were genuinely open at any moment: fast in and fast out.

Software Sovereignty: The Sphider Plus 2.9 Revolution

On April 8, 2013, the software stack was revolutionized with the deployment of Sphider Plus 2.9. This wasn’t merely a crawler update; it was a multi-lingual, multi-format engine that transformed SnipeSearch into a global discovery platform.

- Global Language Support: Version 2.9 introduced critical compatibility for Internationalized Domain Names (IDNs) and non-ASCII URLs. For the first time, the index could ingest the web in Cyrillic, Chinese, Arabic, and Greek, removing the Latin-alphabet barrier.

- Deep Document Indexing: The engine expanded beyond HTML to “see” inside the modern office. Integrated converters allowed the crawler to strip and index data from PDFs, DOCX, XLSX, and PPTX, as well as legacy DOC and XLS files.

- Multimedia Intelligence: The system began extracting rich metadata, including EXIF data from images, ID3 tags from audio, and specialized video metadata, turning the search results into a high-context library of the digital world.

The “Database Sharing” Logic and Modular Scaling

To manage the velocity of 10 million pages per month, SnipeSearch implemented a modular “database sharing” logic. Rather than a single, monolithic database that would eventually collapse under its own weight, the system supported up to five separate databases with unlimited table sets.

This allowed the “Digital State” to partition content into logical categories, such as /store or /research, dramatically reducing indexing times and ensuring that a failure in one sector would not compromise the entire ecosystem.
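The partitioning idea can be sketched as a simple routing step that sends each crawled URL to a database based on its path. This is an illustrative sketch only, not Sphider Plus code: the database names, the `ROUTES` table, and `pick_database` are hypothetical, and only the five-database limit and the /store and /research examples come from the text.

```python
from urllib.parse import urlparse

MAX_DATABASES = 5  # limit stated in the text

# Hypothetical mapping of path prefixes to database names.
ROUTES = {
    "/store": "db_store",
    "/research": "db_research",
}
DEFAULT_DB = "db_main"  # hypothetical catch-all database

# Guard against configuring more databases than the system supported.
assert len(set(ROUTES.values()) | {DEFAULT_DB}) <= MAX_DATABASES


def pick_database(url: str) -> str:
    """Return the database that should index this URL."""
    path = urlparse(url).path
    for prefix, database in ROUTES.items():
        # Match the prefix itself or anything beneath it, so that
        # "/storefront" is not mistaken for "/store".
        if path == prefix or path.startswith(prefix + "/"):
            return database
    return DEFAULT_DB
```

Because each category lands in its own database, a reindex or a failure in one partition leaves the others untouched, which is the isolation property described above.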

You can still preview this feature as it once was on snipesearch indie.

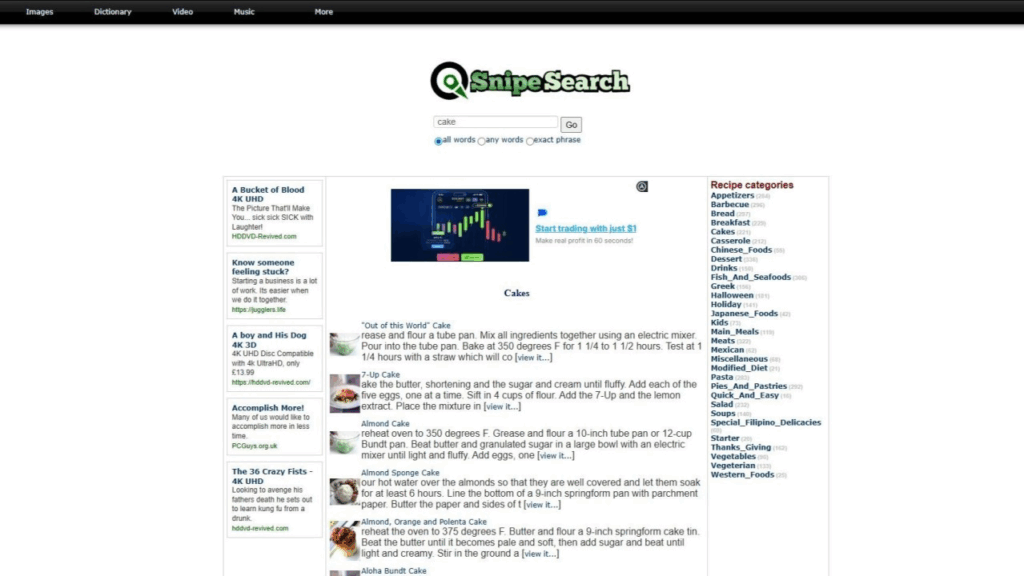

The Visual Revolution: Results Preview (May 2013)

On May 9, 2013, SnipeSearch pioneered a massive leap in user experience by launching the “Results Preview” feature. By displaying a live thumbnail of the destination page to the left of each search result, SnipeSearch provided users with a “Visual Firewall.” This allowed for an immediate assessment of a website’s quality, layout, and legitimacy before a single click was ever made. In an era where “clickbait” and malicious redirects were becoming common, this feature offered a layer of transparency and safety that text-only snippets simply could not provide.

What makes this milestone particularly significant is how far SnipeSearch was ahead of its industry-leading competitors. Google did not experiment with similar visual previews until much later, often relegating them to small hover icons or specific mobile interfaces, and only consistently integrated a comparable “Side Panel” preview feature around 2022. By implementing this in early 2013, SnipeSearch was nearly a decade ahead of the curve, proving that its “Under the Stairs” cluster had the processing power to generate and serve visual data that Silicon Valley was still treating as a luxury.

From a technical standpoint, the Results Preview was a “stress test” for the newly ignited sovereign cluster. Generating these thumbnails in real-time or from a frequently updated cache required significant overhead, which would have been impossible under the 5GB bandwidth cap of the old Orbit system. This feature served as the functional debut of SnipeSearch’s new high-velocity infrastructure, demonstrating that the move to self-hosting wasn’t just about saving money; it was about enabling premium features that the mainstream web wasn’t yet ready to support.

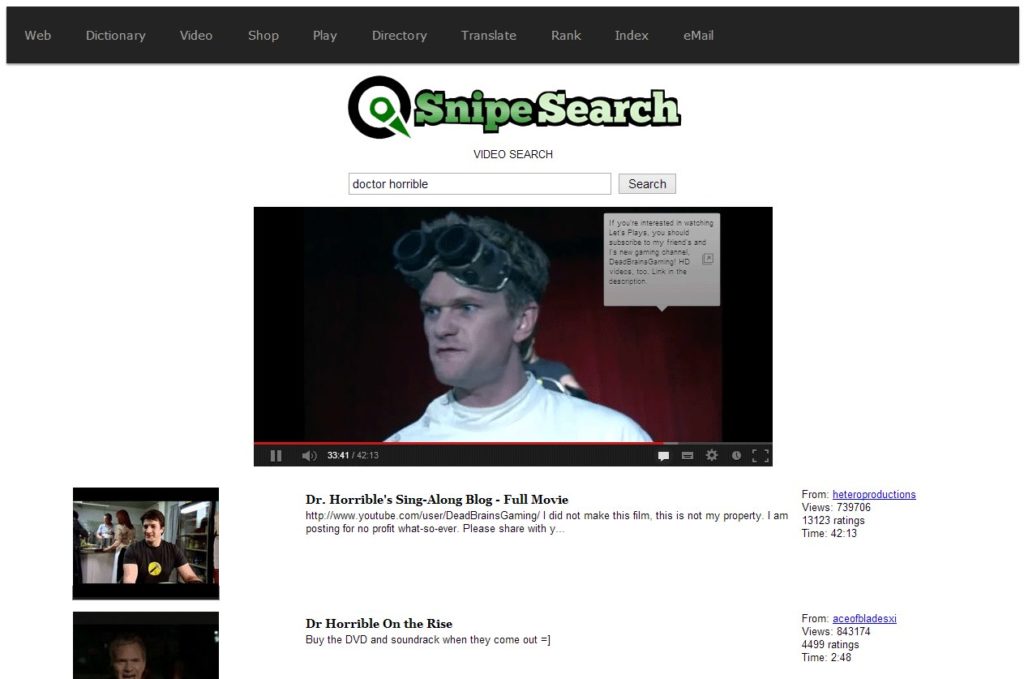

The 2013 Brand Refresh: Multimedia & Identity (May 11, 2013)

On May 11, 2013, SnipeSearch debuted its new visual identity, rolling out a streamlined logo that signaled the transition from a technical experiment to a professional “Digital State.” This refresh was launched alongside a major hardening of the SnipeSearch Video vertical. Far more than a simple video host, the service acted as a powerful multimedia aggregator, pulling high-fidelity content from across the major video platforms of the era, including YouTube.

The cluster was the engine behind this integration. It had to manage simultaneous API requests and cache metadata from multiple external sources to ensure a seamless user experience. The power of this infrastructure was best demonstrated by the platform’s ability to serve and display cult-classic content like Dr. Horrible’s Sing-Along Blog with “epic” playback quality. By utilizing the cluster’s Tier-0 SSHD storage to manage the rapid-fire indexing of video results, SnipeSearch delivered a unified, high-definition viewing interface that rivaled dedicated streaming services.

This dual-launch proved that SnipeSearch was becoming a comprehensive media hub. By successfully aggregating and serving data-heavy video content from across the web into a single sovereign interface, the project demonstrated Full-Stack Sovereignty. It proved that the cluster could manage complex external API traffic and heavy multimedia throughput simultaneously, all while maintaining the “Zero-Trust” privacy that the mainstream platforms were beginning to abandon.

The .CC Crisis and the Free Web Commitment

While building this stack, a strategic threat emerged. In July 2011, Google “de-indexed” over 11 million sites in the .co.cc space, a move that indirectly crippled providers like usa.cc. For SnipeSearch, which operated snipesearch.usa.cc at the time, this was a moment of clarity.

Because SnipeSearch had built its own sovereign index, it did not need to be “discoverable” by Google; it was the discovery engine. This event solidified the commitment to providing Free Hosting and SLDs (Second-Level Domains). The project determined that if tech monopolies were going to kill the free web to empower premium domain squatters, SnipeSearch would provide the sovereign infrastructure, hosting, search, and a dedicated ISP, to ensure small businesses remained un-deletable.

Hardened Security and Selective Crawling

The pivot of 2013 was defined by the transition from theory into physical iron. As SnipeSearch moved toward high-velocity indexing, swallowing 10 million pages a month, it became a high-value target. To protect the project, a multi-layered, enterprise-grade security stack was deployed under the stairs, transforming a residential setup into a fortified data center.

Layered Perimeter Defense: The Iron Gates

In 2013, the project had not yet transitioned to the PCGuys business infrastructure. Security began at the edge of a residential network, where the team had to manage massive bandwidth for simultaneous crawling and user access using two ADSL connections. This traffic was policed by a professional-grade hardware chain:

- The Cisco Gateway: A Cisco 1941 Integrated Services Router was hardwired directly to the modems, serving as the primary gatekeeper for inbound and outbound traffic flows.

- The “Red Box” Sentinel: Following the Cisco router was a WatchGuard Firebox 500 (F2064N) rack-mount firewall. This dedicated VPN/Firewall appliance provided the heavy-duty packet inspection and network-level encryption necessary to safeguard the cluster from external intrusion.

- IPCop 2.0: Deepening the perimeter, a specialized “mini-server”, running 4 Xeon cores and 8GB of RAM, was dedicated solely to the IPCop 2.0 firewall distribution. This acted as a secondary internal barrier, ensuring that the “Spoke 1” cloud remained invisible to the public internet except through tightly controlled ports.

System-Level Hardening and Real-Time Surveillance

Inside the servers, the defense-in-depth strategy continued across the 26-core cluster.

- CSF (ConfigServer Security & Firewall): Every blade ran CSF, a stateful packet inspection (SPI) firewall and login failure daemon. This allowed the system to automatically block IP addresses exhibiting suspicious behavior, essential for maintaining stability when traffic spiked to 16,000 active users in a single day.

- The Dual-Engine Malware Guard: To ensure the index remained clean while crawling and receiving user uploads, SnipeSearch employed a tag-team antivirus strategy. Clamd (the ClamAV daemon) was constantly active, providing real-time file monitoring.

- Sequential Upload Protection: Once Clamd finished a check on an upload, AVG for Linux was immediately triggered to scan the file again, providing an additional layer of Windows-level protection for the end-user.

- Automated Segmented Scans: To maintain peak performance during the 2013 growth, AVG was configured via Cron jobs to perform “Segmented Scans” during low-traffic periods. Every 24 hours, the system would scan 20% of the entire file system, ensuring a full-depth scrub of the Digital State’s storage every five days without taxing the CPU during peak indexing hours.
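The segmented scan schedule can be sketched as follows. This is a minimal illustration under stated assumptions, not the original Cron configuration: it assigns each path to one of five segments with a stable hash and picks the day’s segment from the calendar date, so five consecutive nightly runs cover everything once. The function names are hypothetical, and a hash-based split gives roughly, not exactly, 20% per night.

```python
import zlib
from datetime import date

SEGMENTS = 5  # 20% per day gives a full pass every five days


def segment_of(path: str) -> int:
    """Stable segment number (0-4) for a path, derived from a CRC32 hash."""
    return zlib.crc32(path.encode("utf-8")) % SEGMENTS


def todays_segment(day: date) -> int:
    """Segment due for scanning on a given day; cycles every five days."""
    return day.toordinal() % SEGMENTS


def paths_to_scan(paths, day: date):
    """Subset of paths that belong to the segment scheduled for `day`."""
    segment = todays_segment(day)
    return [p for p in paths if segment_of(p) == segment]
```

A nightly job would call `paths_to_scan` with the current date and hand the resulting list to the scanner. Because the segment depends only on the path and the date, no state needs to be stored between runs.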

Sovereign Efficiency: Selective Indexing

Security in 2013 was also defined by operational efficiency. To prevent the system from being overwhelmed by “digital noise” or DDoS-like traffic patterns during crawls, the Sphider Plus 2.9 logic implemented:

- Div-Based Indexing: By utilizing divs_use.txt, the crawler could ignore navigation menus, footers, and sidebars. It targeted only the core data within specific `<div>` tags, ensuring that processing power was spent on high-value intelligence rather than redundant code.

- Intrusion Detection (IDS): The stack integrated a custom IDS designed to protect against XSRF (Cross-Site Request Forgery) and Session-Fixation attacks, maintaining the “Zero-Trust” integrity of the SnipeSocial logins and user data.
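The div-based approach can be illustrated with a small extractor that keeps only the text found inside chosen `<div>` elements. This is a sketch of the general technique using Python’s standard library, not the Sphider Plus implementation. The format of divs_use.txt is not described here, so the sketch simply takes a list of div ids, and the ids in the usage note are hypothetical.

```python
from html.parser import HTMLParser


class DivTextExtractor(HTMLParser):
    """Collects text that appears inside divs whose id is in `wanted_ids`."""

    def __init__(self, wanted_ids):
        super().__init__()
        self.wanted_ids = set(wanted_ids)
        self.depth = 0      # div nesting depth inside a wanted div (0 = outside)
        self.chunks = []    # text fragments collected so far

    def handle_starttag(self, tag, attrs):
        if tag != "div":
            return
        if self.depth > 0:
            # Nested div inside a wanted div: track it so the matching
            # close tag does not end collection early.
            self.depth += 1
        elif dict(attrs).get("id") in self.wanted_ids:
            self.depth = 1

    def handle_endtag(self, tag):
        if tag == "div" and self.depth > 0:
            self.depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if self.depth > 0 and text:
            self.chunks.append(text)


def extract(html: str, wanted_ids) -> str:
    """Return the text inside the wanted divs, joined by single spaces."""
    parser = DivTextExtractor(wanted_ids)
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)
```

For example, calling `extract(page, ["content"])` on a page with `nav`, `content`, and `footer` divs would return only the text of the `content` div, so menus and footers never reach the index.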

The 2013 Security Verdict: By combining a Cisco 1941, a WatchGuard Firebox, and IPCop with system-level CSF and a sophisticated Clamd/AVG dual-scanning routine, SnipeSearch built a fortress on residential dual-ADSL lines. This multi-layered approach ensured that while the engine was aggressively expanding its global index, the Digital State remained impenetrable to both network intrusions and file-level threats.

March 10, 2014: The “What’s New on the Net” Landmark

In the midst of this technical bottleneck, SnipeSearch received its first major external validation. On March 10, 2014, the influential technology platform “What’s New on the Net” published a review that would define the project’s reputation for years to come.

The review was a milestone: it explicitly praised SnipeSearch for superior performance compared to Google, highlighting the engine’s ability to deliver accurate, relevant results without user tracking. The review noted:

“Snipesearch is a unique venture in the world of digital search engines… notably highlighting our search engine’s ability to deliver accurate and relevant results without tracking users.”

This wasn’t just praise; it was empirical proof that the sovereign “Under the Stairs” cluster was outperforming Silicon Valley giants in both speed and privacy. This recognition provided the final momentum needed to break the bandwidth chains and achieve total independence.

Network Sovereignty: The PCGUYS ISP Transition (2014)

By early 2014, the “Under the Stairs” cluster was physically complete, but it was suffocating. The 26-core sovereign cluster and its 16TB storage array had outgrown the standard British Telecom (BT) residential lines. As indexing velocity stabilized at 10 million pages per month, the high-frequency packet requests and massive data ingestion began to trigger domestic throttling and instability.

The Mathematics of the Bottleneck

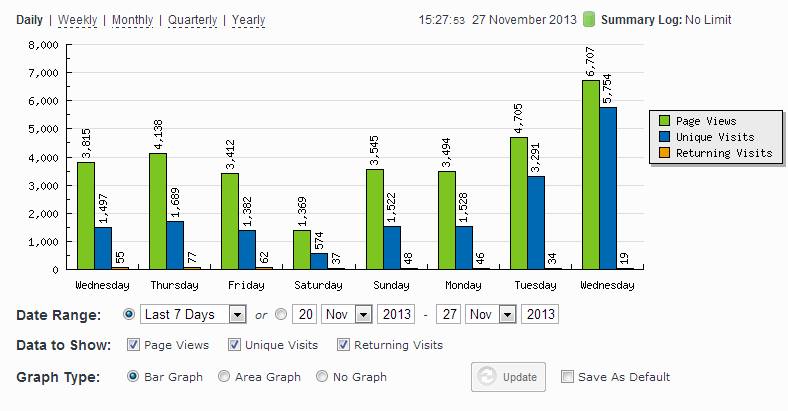

The need for the transition was driven by a non-linear explosion in user behavior. In November 2013, the platform averaged 7,000 searches per day; by the end of December, that number had surged to 30,000 searches per day.

The technical limitations of ADSL2+ became a mathematical wall:

- The “Burst” Reality: Real-world traffic is never linear. During peak periods, the cluster saw up to 300 searches per second. While the crawler was ingesting a consistent 3.86 pages every second, the domestic ADSL pipes could not handle the 500+ “half-open” connections required to serve users simultaneously without timing out.

- The Linear Capacity: At an average load time of 4 seconds per search, the connection could theoretically handle 22,000 searches a day only if users arrived in a perfectly spaced, linear fashion.
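The crawl rate and the linear capacity quoted above follow from simple division. The sketch below reproduces them, assuming a 30-day month for the crawler and strictly one search at a time for the linear case; the function names are illustrative. The linear figure comes out at 21,600, which the text rounds to 22,000.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds


def crawl_rate(pages_per_month: int = 10_000_000, days: int = 30) -> float:
    """Average pages ingested per second at a steady monthly crawl volume."""
    return pages_per_month / (days * SECONDS_PER_DAY)


def serial_daily_capacity(seconds_per_search: float = 4.0) -> int:
    """Searches per day if requests arrive back to back, one at a time."""
    return int(SECONDS_PER_DAY / seconds_per_search)


def average_rate(searches_per_day: int) -> float:
    """Average searches per second implied by a daily total."""
    return searches_per_day / SECONDS_PER_DAY


if __name__ == "__main__":
    print(round(crawl_rate(), 2))           # about 3.86 pages per second
    print(serial_daily_capacity())          # 21600 searches per day
    print(round(average_rate(30_000), 2))   # about 0.35 searches per second
```

The gap between the modest daily average and the reported peak bursts is the point of the passage: a connection sized for the average has no headroom when arrivals cluster.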

The Birth of a Sovereign Carrier

To resolve this bottleneck, the organization didn’t just upgrade a plan; it changed its status in the eyes of the telecommunications industry. While operating as a sole trader, Stephen Driver formally registered as an Internet Service Provider (ISP) under the name PCGUYS. This strategic move allowed the project to bypass residential consumer protections (and their associated limitations) to secure enterprise-grade infrastructure.

Infrastructure Ignition: April 29, 2014

On April 29, 2014, the Borehamwood residence underwent a massive technological leap. The cluster was switched from domestic ADSL to dual 50Mbps business connections (FTTC).

This provided the “Digital State” with several mission-critical upgrades:

- Fixed IP Stability: For the first time, the “Spoke 1” Ignition had the permanent digital address required for a reliable global cloud, resolving the previous DNS instability seen during the .usa.cc era.

- Load Balancing & Redundancy: Utilizing the Cisco 1941 router and the WatchGuard Firebox, the two lines were bonded to allow simultaneous high-velocity crawling on one pipe while maintaining low-latency user access on the other.

- Symmetric Throughput: The move to business-grade fiber significantly improved the upload speeds required for the engine to serve search results and multimedia content to its 16,000+ daily users without lag.

July 3, 2014: The Algorithmic “Nuclear” Update

With the 100Mbps capacity finally live, SnipeSearch unleashed its most significant software update to date on July 3, 2014.

Before this, the index was limited by the “skinny pipes” of residential internet. With the PCGUYS infrastructure live, the algorithm was updated to integrate results from a diverse array of global sources. This update transitioned SnipeSearch from a limited dataset to a comprehensive global powerhouse, intelligently ranking information to match the “Google-beating” speed identified in the March review.

The Modern Legacy

This transition marked the final brick in the wall of total technical independence. By owning the ISP, the hardware, and the search logic, SnipeSearch achieved Full-Stack Sovereignty. No third party, whether a hosting provider like Orbit or a telecom giant, could pull the plug on the index.

Today, PCGUYS is no longer just a tactical registration; it is a formal property of Snipe Group Limited, standing as a testament to the era when SnipeSearch built its own private internet to protect the free web.

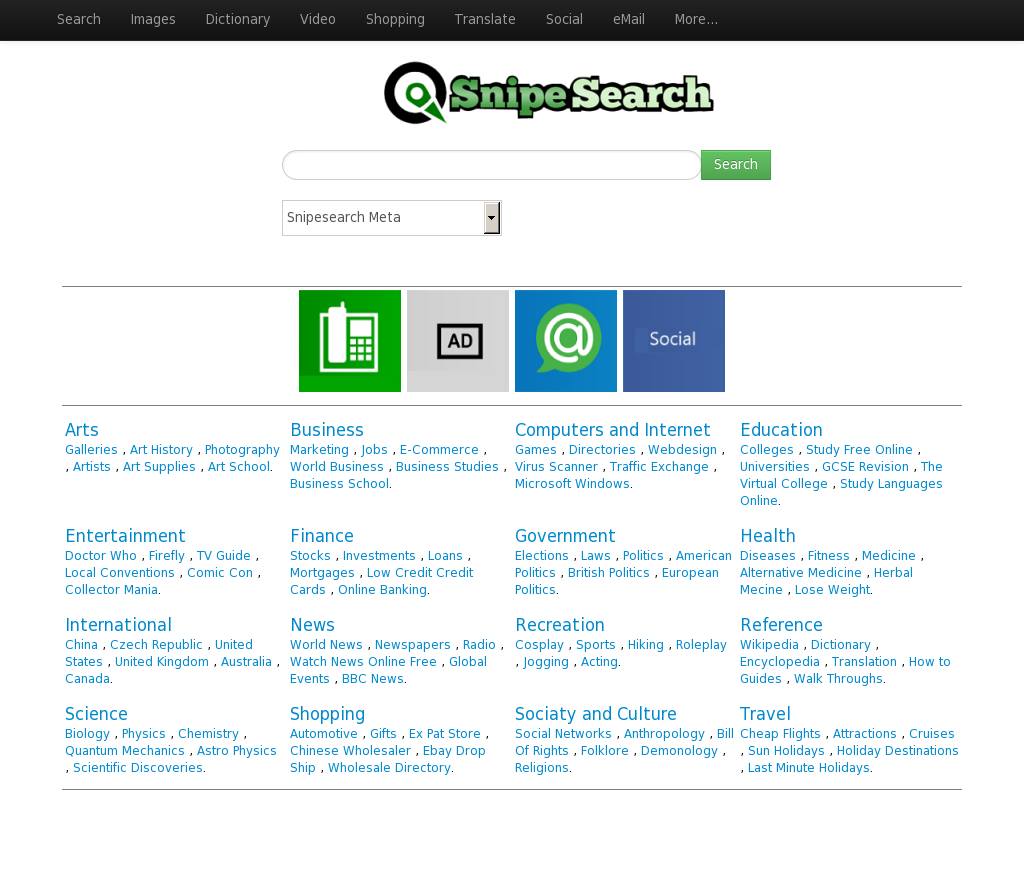

Functional Diversification (2013–2015)

The stability achieved by the 26-core “Under the Stairs” cluster wasn’t just a technical flex; it was the engine that powered a rapid-fire rollout of specialized services. By shifting away from the Orbit Meta System, SnipeSearch gained the architectural freedom to launch modular sub-domains, each operating as a distinct “spoke” within the sovereign cloud.

2013: The Year of Multi-Vertical Growth

With the 2TB SSHD and SAS drives providing massive headroom, the summer of 2013 became a period of intense functional expansion.

- June 14, 2013 (Video Search): SnipeSearch broke beyond text-based indexing by integrating a specialized Video Search module. This utilized advanced APIs (including YouTube and Yahoo) to provide a centralized multimedia discovery hub, cached and served with the 431ms speed enabled by the cluster’s hybrid-flash storage.

- June 15, 2013 (The Sovereign Dictionary): One day later, dictionary.snipesearch.co.uk went live. This was a massive linguistic database featuring over 100,000 words, designed to provide instant definitions without relying on external third-party redirects.

- November 13, 2013 (SnipeSocial Genesis): Marking the transition from a search engine to a community platform, internal social networking began. This precursor to the V5 and V6 maps allowed for a “Zero-Trust” environment where users could communicate within the sovereign infrastructure.

Late 2013: The Recipe Vertical and the Return of SES

The launch of recipe.snipesearch.co.uk added a specialized layer to the “Digital State.” This vertical featured a pre-loaded database of 20,000 recipes, but its technical implementation differed from the main index.

While the global search was powered by Sphider Plus 2.9, the Recipe vertical utilized Search Engine Studio (SES), the same software used in the original 2005 “Mum Knows” launch on the Compaq ML570.

- The Logic of Scale: The team recognized that while SES “sucked at scale” for crawling the entire web, it was unmatched for single-site, localized indexing.

- Hyper-Speed Vertical Search: By pointing SES at a fixed, internal 20,000-recipe database, SnipeSearch achieved near-instantaneous query returns. It functioned like a “site search” on steroids, allowing users to filter through thousands of culinary data points without the overhead of the global crawler’s multi-threaded logic.

- Modular Integration: This proved the versatility of the cluster, running Sphider Plus for the world, SES for specialized verticals, and IPCop/WatchGuard for the perimeter simultaneously.

2014–2015: Algorithmic Maturity

Once the hardware was “Ignited” in January 2014 and the PCGUYS ISP was active, the focus shifted from adding features to refining intelligence.

- The Signal Integration Update: Between 2014 and 2015, the platform underwent major algorithmic overhauls. The goal was to establish complex relationships between varied search signals, moving from simple keyword matching to a sophisticated understanding of how diverse external sources interacted.

- Cross-Domain Intelligence: The engine began to synthesize data across its own verticals. A search for a specific term could now trigger related results from the News, Video, Recipe, and Dictionary modules simultaneously, creating a unified knowledge graph that mirrored the “Spoke” architecture of the hardware itself.

Why This Mattered: The “Spoke” Philosophy

This wasn’t just a collection of websites; it was a decentralized ecosystem. By using separate databases for each vertical (as enabled by Sphider Plus 2.9), SnipeSearch ensured that a traffic surge on the Video Search module wouldn’t slow down the main Web index. This modular approach served as the direct inspiration for the project’s future proprietary code and the eventual creation of the Index Mk 4 V6 Map in 2017.